ID proponents sometimes compare the features of a system that is known to be designed by humans with those of a biological system supposedly “designed” by nature. The comparison is then used as evidence for the claim that if the biological system exhibits attributes similar to those of the human system, then a logical inference is that the biological system was intelligently designed. Neo-Darwinists cry foul by stating that since we know the human systems are designed we cannot reasonably attribute their existence to chance. Besides, they will also counter, the human systems proposed as analogies also have many dissimilarities with the biological systems.

But is that really the case? Consider this latest news headline, as well as a couple of posts I previously published.

Per CNN.com, Genetic barcodes will ID world's species,

A team of international scientists launched an ambitious project on Thursday to genetically identify, or provide a barcode for, every plant and animal species on the planet.

By taking a snippet of DNA from all the known species on Earth and linking them to photographs, descriptions and scientific information, the researchers plan to build the largest database of its kind.

From WordIQ.com, the history of the barcode,

The idea for the barcode was developed by Norman Joseph Woodland and Bernard Silver. In 1948 they were graduate students at Drexel University. They developed the idea after hearing the president of a food sales company wishing to be able to automate the checkout process. One of their first ideas was to use Morse code printed out and extended vertically, producing narrow and wide bars. Later, they switched to using a "bulls-eye" type barcode.

Let’s see, coding is applied to identifying items in a database. It’s quite interesting that a randomly generated, purposeless entity such as DNA could be utilized in such a manner.

----------

And then there’s…

Frozen Accidents (from September 1, 2004)

Back in May I wrote a post titled Ultra-conservative DNA in which we see certain DNA sequences that supposedly evolved to a certain state (and, more importantly, function) and then stopped - or, froze - in place.

Per the August 3rd edition of Reasons to Believe's webcast Creation Update we hear of a study titled Why nature chose A, C, G and U/T: An error-coding perspective of nucleotide alphabet composition. From the study's abstract,

The question of whether the size and make-up of the natural nucleotide alphabet is a consequence of selection pressure, or simply a frozen accident, is one of the fundamental questions of biology. Nucleotide replication is essentially an information transmission phenomenon, and so it seems reasonable to explore the issue from the perspective of theoretical computer science, and of error-coding theory in particular. In this analysis it is shown that the essential recognition features of nucleotides may be naturally expressed as 4-digit binary numbers, capturing the hydrogen acceptor/donor patterns (3-bits) and the purine/pyrimidine feature (1-bit). Optimal alphabets consist of nucleotides in which the purine/pyrimidine feature is related to the acceptor/donor pattern as a parity bit. Numerically interpreted, such alphabets correspond to parity check codes, simple but effective error-resistant structures. The natural alphabet appears to be an adaptation of one of two optimal solutions, constrained to its present size and composition by a combination of chemical and coding-theory factors. (emphasis added)
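For readers unfamiliar with the term, here is a minimal sketch of what a parity check code is, assuming a plain even-parity scheme over 3-bit patterns. The bit patterns and function names below are hypothetical illustrations; they are not the nucleotide assignments used in the study, which the abstract does not spell out.

```python
from itertools import product

def parity_bit(pattern):
    """Even-parity bit: 1 if the pattern holds an odd number of 1s, else 0."""
    return sum(pattern) % 2

def encode(pattern):
    """Append the parity bit to a 3-bit pattern, giving a 4-bit codeword."""
    return tuple(pattern) + (parity_bit(pattern),)

def is_valid(codeword):
    """A codeword passes the check when its total number of 1s is even."""
    return sum(codeword) % 2 == 0

def flip(codeword, index):
    """Return a copy of the codeword with one bit inverted (a single error)."""
    return tuple(bit ^ 1 if i == index else bit for i, bit in enumerate(codeword))

def hamming_distance(a, b):
    """Count the positions at which two codewords differ."""
    return sum(x != y for x, y in zip(a, b))

# All 3-bit patterns (hypothetical stand-ins for acceptor/donor patterns).
codewords = [encode(p) for p in product((0, 1), repeat=3)]

# Smallest distance between any two distinct valid codewords.
min_distance = min(
    hamming_distance(a, b) for a in codewords for b in codewords if a != b
)

# Does every possible single-bit error produce an invalid word?
single_flips_detected = all(
    not is_valid(flip(c, i)) for c in codewords for i in range(4)
)
```

In this sketch any single flipped bit turns a valid codeword into an invalid one, so the error is detectable, while two flipped bits cancel out and slip through. That is the sense in which such codes are "simple but effective."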

Given that the evolutionary paradigm posits natural selection as a blind and unguided process, it is no wonder that potential plateaus in the process are defined as frozen accidents. It's also interesting that the process being addressed, that of parity check codes, is that of intelligent action. Hardly an accident.

If nucleotide replication is essentially information transfer, and if theoretical computer science and error-coding theory allow us to analyze the parity check codes contained within the nucleotide alphabets, what could be driving the conclusion that the entire process was driven by determinism and chance?

From the Christian's perspective, God created mankind in His image. One of the many implications of such a doctrine is that God has endowed mankind with creative ability inasmuch as God expresses His creative ability. The pre-existence of information, alphabets, parity check codes, and the like, should not be surprising in that one would expect the God of the Bible to express His creative ability in forms that mankind could not only recognize, but have the ability to develop as well.

----------

As well as…

On plans, templates, and similarities (from April 21, 2004)

Over at The Panda’s Thumb we see a post that highlights a study done on limb loss in vertebrates. John Lynch states,

An interesting article in this week's edition of Nature suggests that at least in some fish, alterations in a single gene bring about evolutionary change in the form of limb (fin) loss.

Two follow-up posts on TPT, each by P. Z. Myers, can be found here and here. In the first follow-up P. Z. states,

Some of the complicating features of developmental genetics are pleiotropy and multigenic effects: that is, that the genes required to build an organism are all tangled together in an intricate web, with multiple genes required to properly assemble each character (that's the multigenic part), and each gene having multiple effects on multiple characters (that's pleiotropy). One might think of the organism as a house of cards, each card supporting all of the cards above it, so that tinkering with any one piece leads to catastrophic collapse. This isn't the case, of course. While developing systems are all elaborately interlocked, they also exhibit modularity and surprisingly robust flexibility.

In the second follow-up P. Z. states, with regards to the idea that the modularity and robust flexibility of a system could be used as evidence of design:

Quite the contrary, I see evidence of mechanisms that permit integrated evolution of organisms, with no designer required.

He provides more detail, via his own blog Pharyngula, with Development. Evolution. Genes. Fish. What's not to like? In it we see the following image:

Essentially, what we're hearing is that the integrated complexity found within the genetic structure of these species achieved its integrated complexity through blind chance because... well... they're here aren't they? Isn't it amazing how nature has solved the problem of spitting out either limbs or fins? - all with the flip of a switch!

Yet imagine the power of templates. Imagine the efficiency in using a plan that allows for minor alterations that garner major changes. Imagine a set of instructions, a code - if you will, that allows one to step through a financial accounting program and, depending on the desired outcome, run a report of actual cost expenditures by region vs. running a report of revenue by project. Shucks, I don't have to imagine it at all - I ran a set of those reports today on a piece of software designed by semi-intelligent people.

On the morphological side, consider the skeletal and muscular structure of the human arm and hand.

Now note a robotic arm and hand that mimics the same functional capabilities as its human counterpart. As the website for the Shadow Robot Company states,

"The human hand has twenty-four powered movements. Shadow have implemented every single one, with all the power and range of movement, that the human hand has... The muscles in the upper arm and torso are analogous to the human's."

Have the robotic designers used the basic structural and morphological elements of a human arm and hand as a guide for their design criteria?

How about a steam rotary engine? Let’s look at the schematics for such a device.

If we cross reference now with electrical rotors we find the following from Penntex: a rotor, and a stator.

Cross referencing with pump rotors we find, at Seepex pumps: a universal joint.

Common sense tells us that there are similarities in these various human designs because they are all working off the same basic template (i.e., rotary motor design). Variances within the details are due to varying specific parameters with regards to design criteria as well as to function, materials, power supply, etc.

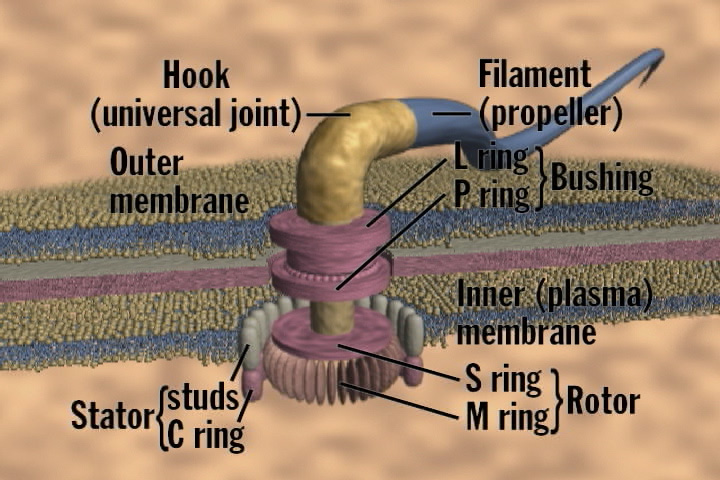

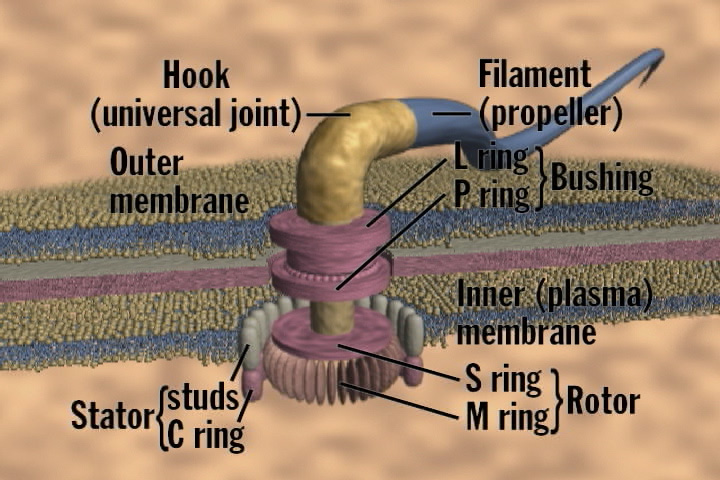

Now compare the human artifacts with a flagellar motor per the NCBI and ARN websites:

How interesting that the components of the flagellum precisely match up with the designed components of rotary motors.

Additionally, note that the work in robotics, as well as the development of the rotary motors, did not occur through blind, random chance, but through intentional, rationalistic thought processes – i.e., through design.

14 comments:

Dark,

You've been reading my blog long enough to know:

- I believe that God created the natural order and is sovereign over it, therefore, His actions only appear supernatural from our point of view. IOW, He can operate both naturally and supernaturally.

- The argument of my post was illustrating how human templates have preexisting counterparts in the natural order.

- That I've directed you to a group of scientists who are busy making testable predictions for the Creation Model.

- That complexity alone is not the issue.

- That I believe Chaos Theory is inadequate with regards to describing how a wolf-like creature (or hippo? which is it?) evolved into a whale in the brief time span of 10 million years.

- And that the idea of iterations is useless unless it can be directly tied to demonstrating macro-evolutionary change on the scale of, that lovely example, a wolf-like creature to a whale.

Yeah Dark, evolution is IT only when you ignore the obvious.

Common descent explains nothing buddy. You impose your idea on the data and then claim the data explains your idea (just reference about any book on evo - or refer to Berra's Blunder for a gross example). It's circular reasoning - unwarranted extrapolations based on evolutionary lensing, over and over again.

But if you still need more evidence, then simply wait. Research goes on. And research has pretty much eroded away the idea of "junk-DNA." ERVs are next too (use that as a prediction) - they will fall by the wayside because of complex function... and fail to herald common ancestry.

Before you show your hand, though, you might want to see how well your hand explains why 99% of all humanity believe in magical nonsense of some sort? If nature is all there is, why care?

"Before you show your hand, though, you might want to see how well your hand explains why 99% of all humanity believe in magical nonsense of some sort? If nature is all there is, why care?"

That's nothing more than making an excuse for playing the pretend "make believe in magic" game. It tries to prove nothing other than it might be better to believe in the magical nonsense even if it does not exist. Besides, in the case of your particular religion, it's more about being scared out of their wits that they'll end up in the "double toothpicks" than it is about the "caring."

Anyways, Berra's so-called blunder is yet another example of an analogy that doesn't hold water when subjected to a trivial bit of scrutiny, despite whatever Johnson might want to pretend in his little baby fantasy world. "Nya nya, you said evolution, ha ha, one two three no takebacks, wah wah."

Rusty,

You cling to the idea that evolution is random. It is anything but random.

You misrepresent so many basic facts that one begins to wonder whether you can be objective about anything science related.

What's funny is the 'group of scientists' making testable predictions using the creation model, absolutely fraking hilarious.

Then you go and call evolutionary theory circular, as if a better, more concise theory exists without resorting to God did it. Of course how do you know this? The bible says it. Why does that matter? Because God wrote the bible. You seem unable but more likely unwilling to see the difference between a good solid theory (evolution) and a religious fantasy (ID/creationism).

And this is the height of stupidity: 'Before you show your hand, though, you might want to see how well your hand explains why 99% of all humanity believe in magical nonsense of some sort? If nature is all there is, why care?'

What exactly does that prove? Millions thought the Earth was flat. Of that 99% you stupidly toss about exist 1000's of Gods. Your arrogance in assuming you have the correct one is putrid. To think that you and your ilk are correct and the rest of the planet who thinks you're full of it are wrong is astounding arrogance.

And you silly little man, If nature is all there is, and is all we have IT MAKES IT MORE VALUABLE not less. It makes each living thing more vital, every life more meaningful.

If other fantasy realms exist who cares what happens to this one.

While I permit Anonymous comments, I usually don’t respond to one where the commenter hasn’t at least typed in their name or handle. But I’ll make an exception here (especially when the writing style is so DS’esque).

When I speak of evolution being random I do not mean that there is no pattern or conformity to physical laws. What I mean by using the word random is that there is no specificity due to an intelligence overseeing and guiding the events at hand. Waves crashing on the seashore do not create random markings but do indeed create a recognizable pattern on the sand. However, the patterns, while conforming to the laws of physics, are random in the sense that there is no specificity to them.

I’ve not misrepresented the fact that neo-Darwinists view the sequence of life on Earth as nothing more than a series of undirected coincidences. I’ve also not misrepresented the bias found in many neo-Darwinists when their bluff is called with regards to allowing ID discussions in science forums or in considering testable creation models to be analyzed (as your comment so vividly illustrates).

Whether or not the God theory is correct does not diminish the fact that if the evolutionary theory is circular, it should be discarded. The neo-Darwinist wants everyone else to submit to their rules, but is completely unwilling to admit that they must submit to the rules as well --- in other words, empirical testability is deemed the determining factor (especially when considering if God wrote the Bible), but where is the empirical test that proves empirical testability is valid? At least be honest enough to admit that your worldview foundation rests on… faith.

Maybe my comment about 99% of humanity believing in the spiritual was a bit too obvious for you. It doesn’t prove that Christianity is the correct worldview, but it does illustrate that humans have a propensity for spirit worship (despite being educated to, for instance, know that the world isn’t flat). The evolutionary paradigm can say nothing about such a desire – except for nonsense about belief systems that somehow just arise (through evolutionary means, of course). That’s the point – the naturalism only worldview is utterly incapable of explaining the abstract except in terms of “because” (unless you count the circular explanations which basically state, “we know the abstract came about through evolution because we experience the abstract”).

Logic is not your strong point, is it? You claim that I am arrogant for thinking that Christianity is correct and all other worldviews incorrect? What does that make you, then, if you consider me wrong and yourself right? What does that make you if you, attempting to be magnanimous, claim that no one can know which view is “right,” so all views must be permissible? How do you then deal with my view explicitly stating that your view is wrong? Do you “tolerate” my view or not? Face it buddy, you’re not only in a room with no windows and doors, but you’ve painted yourself into a corner as well. Either you’re a hypocrite for accusing me of arrogance, or you’ve got to admit that there must be a correct worldview out there.

You claim that if nature is all there is then it makes this life even more valuable? Okay, bow down to your god of Pragmatic Nihilism… but just remember that, based on your worldview, when you die – that’s it. So there is nothing to stop someone from placing value on attaining virtually any and every pleasure he desires, regardless of how it impacts other members of our sorry species.

P.S. you also ignored virtually everything my post was about.

"You claim that if nature is all there is then it makes this life even more valuable? Okay, bow down to your god of Pragmatic Nihilism… but just remember that, based on your worldview, when you die – that’s it. So there is nothing to stop someone from placing value on attaining virtually any and every pleasure he desires, regardless of how it impacts other members of our sorry species."

That's only an argument about why it might be a good idea to pretend in your God who drowns the "sorry species", whether he exists or not. You can't do better, because you don't have any evidence. Oh, big surprise that is.

Some people question the existence of something that remains invisible and silent, never says a word about the whole question, has us determining its "truth" from a medium that is prone to all sorts of mistakes, i. e. an old hand-me-down book compiled from oral traditions, when he could do a whole lot better, but won't. And these people who question that are the irrational Nihilists, huh. You won't even give them credit for having legitimate reasons for doubting this stuff, and they are the ones who are arrogant, eh.

Allow me to once again translate all that to what it might sound like to the people who have their minds in lockdown mode: "Blah blah blah, blah, yadda yadda, blah."

386,

Why can't you directly address what I have to say? You certainly have the right to reject the Christian God, but what does that have to do with whether or not Pragmatic Nihilism works? BTW, I don't recall referring to your worldview as "irrational Nihilism" but "Pragmatic Nihilism." Nor do I recall calling that view arrogant, but simply pointing out that anyone who claims to any form of tolerant pluralism is a hypocrite whenever he denounces an opposing view.

I spent my undergrad career studying chaos, and I'm focusing on mathematical evolutionary modeling for my graduate studies (PhD advisors: Joe Felsenstein in genome sciences, Mark Kot in applied mathematics.) My wife has her master's in mathematics, and has studied ID for about 3 years (at the scholarly level, not the popular level) so I'm pretty aware of the current state of the theory. We're also solid, committed Christians with active ministry, especially in teaching classes on Biblical Interpretation. All this is to say, I'm pretty qualified to speak here.

DS is right that chaos generally involves simple systems with complex behavior (and it's easier to get such behavior out of discrete "iterative" systems than continuous systems). But, his point was that simple systems lead to complex systems -- and that's not supported by his analogy to chaos, where simple systems lead to complex behavior. The "well-known fact" that complex systems arise from simple systems is not particularly well-established outside of the area of study in question.

Now, that said... over the past few years, I've really warmed up to evolutionary theory. It's still got some problems and some serious gaps (especially surrounding speciation/cladogenesis), but it's also got some really good insights. It shouldn't be dismissed lightly. There are some good models that show complex systems arising from simple systems, and while they don't fully establish what evolutionary apologists say they do, they're not to be taken lightly.

Far too often, I see people cite "intelligent design" as if it's some great competitor to evolutionary theory. It's not, really -- it's a great competitor to philosophical naturalism, but it can be used in ways that are compatible with evolutionary theory. Those who use it as a strong competitor to evolutionary theory, right now, are misusing it (yes, even Dembski) -- the machinery isn't yet in place for it to be used for a project of that magnitude. It's still a baby science that can only be used in an intuitive sense right now, and it needs to be developed a lot more on known problems (like signal analysis) before it'll be robust enough to use on unknown problems (like origins).

Also, I wouldn't hold the partial circularity of evolutionary theory against it. You assume a theory, and then you notice that the data does in fact work with your theory, and when it doesn't, you refine the theory. That's not so much "circular" as "spiral" -- you start out with a weak assumption, and then you strengthen it as time goes on and the evidence mounts. This is the same sort of reasoning I employ when reading the Bible and praying -- begin with the weak theory that the Bible was intended to make sense and that God might exist, and read and pray, and as evidence mounts I become more and more convinced of Jesus' divinity. Similarly, with evolution, they assume the spectrum of life comes from natural processes, and then they take data and uncover natural processes that lead to some of the patterns they see in the data, and when they don't they refine the theory, and when they do the theory is strengthened. It's not so bad a system.

Lotharbot,

Your insightful comments are always welcome.

You are correct when you speak of distinguishing between complex patterns and complex systems. I'm not so sure that the average neo-Darwinist recognizes the difference (as evidenced by my continually having to respond, "it's not just complexity" or, "it's not just low probability").

Even though I attack the evolutionary paradigm I would agree that there are certain aspects of it that are very valid. I think Michael Denton has a chapter on that in his book Evolution: a Theory in Crisis, but it's been a while since I've read it.

I think you bring up a critical aspect of this whole issue by highlighting the fact that ID most directly affects philosophical naturalism. But I also think that the manner in which it affects PN prevents it from replacing PN (while keeping evo). Make sense? My argument is that once you buy the evo contract, you're stuck with the whole package - which includes PN, which leads to atheistic naturalism. There are plenty of people who disagree with me on that... which is quite evident from the history of posts on this blog.

In claiming circular reasoning I am not talking about how a process, like the scientific method, operates. I realize that we operate under premises and then test data, refine conclusions, re-test, etc.

Circularity in thinking can sometimes be difficult to demonstrate. I think part of the difficulty lies in how we view the aspect of bias. I've argued in the past that neo-Darwinists display enormous bias in their writings. Responses have been made that point out that I have bias in my writings. Well... yes, of course I do! I'm not saying that having bias is wrong, but that it occurs. I don't fault anyone for having bias, but only for presenting conclusions as if they have no bias. So when someone gives the fossil record, showing homology, as evidence of common descent, aren't they putting the cart before the horse? The mechanism of modification and natural selection is supposed to produce changes at the level of speciation. Where's the evidence? Well, the fossil record. But the fossil record was the very thing we were supposed to be explaining. And now it's being used as evidence for the mechanism that explains the phenomenon found in the fossil record which is evidence for the mechanism that explains the phenomenon found in the fossil record which is evidence for the mechanism that explains...

"My argument is that once you buy the evo contract, you're stuck with the whole package - which includes PN, which leads to atheistic naturalism. There are plenty of people who disagree with me on that..."

I'd be one of them.

Of course, I'm also one of those who says you're not buying the "evo contract" -- you're just buying individual premises and their implications. It may happen that you end up buying most, if not all, of evolution's premises (or you may buy very few of them), but you need not buy the PN/atheistic worldview that is often associated with it.

You could make the same argument with any theory -- "once you buy gravity, you're stuck with atheism" -- but it just doesn't make sense. Buying a particular natural theory in no way forces you to change your epistemological underpinnings, unless you happen to be a strong literalist... and if you're a strong literalist, we need to have a chat on hermeneutics.

"In claiming circular reasoning I am not talking about how a process, like the scientific method, operates.... when someone gives the fossil record, showing homology, as evidence of common descent, aren't they putting the cart before the horse?"

Most evolutionists would agree with you -- high-school biology teaches homology as evidence for evolution, but that's really a misuse of it. It's better to think of the fossil record as the inspiration for the theory than as evidence for it. Long ago, various people (such as Darwin) noticed physical similarities between species and proposed the theory that they shared common ancestry as a possible explanation for those similarities. To use the similarities as evidence for the theory is circular, and anybody who does so should not be taken seriously.

Most modern evolutionists (at least the ones I've talked to) don't use the fossil record as "evidence", unless they're building a cumulative case along with other evidence (in which case, they want to aggregate all of the facts that are consistent with the theory). Instead, they'll treat homology as a rough guide, and they'll use that to give them a starting point for DNA research. Often, the physical structure matches up with the DNA, but on occasion it doesn't. Most evolutionists will be very skeptical of any claims that rely entirely on homology, because they recognize your criticism.

"The mechanism of modification and natural selection is supposed to produce changes at the level of speciation. Where's the evidence?"There are some "toy" experiments that have been done in labs that show something like cladogenesis and speciation. These don't rely on homology or fossil records at all -- they're all on the reproductive isolation / DNA sequence level. So far, they're not very good experiments, and they only show pieces of what they'd really need to show, but they're still pretty interesting. I've spent the past couple years hoping to find better experiments, so far to no avail, but I'm not going to rub the evolutionists' faces in it just yet. Origins, no matter what theory you subscribe to, is such a massive undertaking to analyze that a heavy dose of humility and patience is required.

LotharBot,

Certainly there are those that adhere to the evolutionary paradigm without adhering to PN/AN, but I argue that they are not being logically consistent.

I would argue that gravitational theory differs from evolutionary theory in that their predictions are vastly different in terms of testability. Virtually any theory in physics is, by the qualities of the discipline, “simpler” than that of a “complex” science such as biology. And in physics we’re dealing with watching how matter follows the laws of physics. There is no “evolution” in the same sense as biological evolution. Sure, the history of stars shows an “evolution” in terms of “change,” but such an “evolution” is no different than, say, chestnuts roasting by the fire (and Jack Frost nipping at your nose). In other words, while the physicist can show that the laws of physics act in a certain manner, the evolutionary biologist cannot similarly demonstrate that the evolutionary process can produce species. The problem (for the atheist) with cosmology is that the number crunching is much more justifiable than with neo-Darwinism. As such, we’re left with discovering incredible fine-tuning in the makeup of our universe. Either some higher being “monkeyed” with the settings, or we’re just super, super, super, super, super, super, super lucky. There is no wide-open doorway into atheism as there is with neo-Darwinism – as it is typically defined.

I think you’d be surprised at how many evolutionists use homology as evidence for evolution. At the very least, they rely on it as backup for the idea of common descent (and, at the very worst, consider it a given and use it to develop cladograms). Now while it certainly could be evidence for common descent, it could just as easily be evidence for design templates. Yet that proposition is never allowed. Have you ever compared the differences between structural, functional, and genetic homologies? You should contact Fazale Rana at Reasons to Believe (www.reasons.org). I think you’d be interested in what he’s doing and he’d be interested to hear your comments.

"Certainly there are those that adhere to the evolutionary paradigm without adhering to PN/AN, but I argue that they are not being logically consistent."Well, I have to disagree with you there... rather than muddle the waters in the comments here, might I suggest this as a full-blown topic for future discussion?

"I would argue that gravitational theory differs from evolutionary theory in that their predictions are vastly different in terms of testability.... while the physicist can show that the laws of physics act in a certain manner, the evolutionary biologist cannot similarly demonstrate that the evolutionary process can produce species."I'll skip the commentary on the definition of the word "evolution" vs. the common usage.

In terms of being able to demonstrate processes:

- biologists will never be able to prove conclusively that those processes produced species in the past. But, physicists also can't prove conclusively that gravity worked the same in the past as it does now. That's not terribly disturbing to anyone.

- in principle, biologists *could* demonstrate speciation. My area of research is in mathematical modeling of speciation, because that's where I think the most interesting questions (at the moment) are. It's certainly possible that, in the future, speciation could be modeled or simulated or even observed in a lab.

One thing I caution people about a lot when dealing with these questions: whether it's ID or evolution, be careful not to put too much faith in the idea that a particular theory CAN'T be tested or CAN'T be demonstrated in a particular way. We're always gaining insight, and perhaps in the near future someone will be able to do something that another person has said can't happen, and staked their faith on it. I've seen far too many people stake their faith on a claim that "evolutionists can't analyze _______" and then watched them crumble when evolutionists manage to analyze that exact thing.

"The problem (for the atheist) with cosmology is that the number crunching is much more justifiable than with neo-Darwinism. As such, we’re left with discovering incredible fine-tuning in the makeup of our universe."I'm glad to see you at least separate the concepts on this level. The tuning of the universe and the truth or falsehood of evolutionary theory aren't particularly related, and it's disturbing when people retreat into one in order to avoid discussing the other.

Though, while we're on the subject... do you know of anyone who has actually done a rigorous stability analysis or any sort of bifurcation theory on the universe? I see people cite numbers about how improbable life is in this sort of discussion all the time, but I have yet to see any of these numbers generated in a way that shows mathematical competence.

"I think you’d be surprised at how many evolutionists use homology ... as backup for the idea of common descent."Well... I know of many who use it in various contexts. It's often used in support of the general theory, and often used as a rough heuristic for forming phylogenies.

From the way you describe it, though, it sounds like you're interacting more with evolutionary apologists than actual evolutionists. It wouldn't surprise me if they overuse it...

"Have you ever compared the differences between structural, functional, and genetic homologies?"As Joe Felsenstein said (paraphrased):

We make a tree using structural similarities, and then we make a tree using DNA, and we're like "oh look, they're the same." Well, yes, in the sense that they're both trees.

It really all has to come down to the DNA. All this other stuff is just fluff -- it might inspire DNA research, but the core of evolution is changes in DNA, so that's where the true analysis must be done. Most of the use of homology I've seen has been either rough first-cut guidance for real science, or apologetics.

If you could... remind me to contact Fazale Rana in about a month. I have conferences coming up that I should be preparing for, and I can't afford to put another serious project on my plate right now.

Rusty,

I enjoyed your argument, especially the flagellum comparison pictures. It's also interesting that your critics avoided your main argument and attacked semantics. The flagellum is a chemical-mechanical machine whose discovery evolution would never have predicted. Natural selection (in any of its various forms) cannot explain it. By analogy, the only structures that look like it are designed. Who's making the bigger leap?
